Is China using deepfakes to fool the U.S. military?

10/01/2019 / By Ralph Flores

Deepfakes, a blend of the words “deep (learning)” and “fakes,” have sparked privacy rows recently, and for good reason. The technology behind making fake images is steadily gaining ground, with reports saying that “perfectly real” deepfake images and videos are just months away from being accessible to everyone.

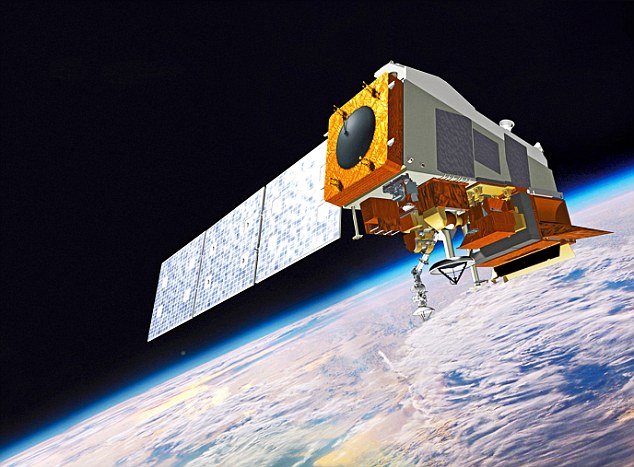

While the ramifications of this technology remain a topic ripe for debate, one country is already making strides in developing deepfakes. A recent report by the National Geospatial-Intelligence Agency (NGA), an intelligence and combat support agency under the Department of Defense, revealed that China has created a program that uses artificial intelligence and deepfakes. In the report, NGA automation lead Todd Myers explained how this emerging technique, known as generative adversarial networks or GANs, can trick the systems that analyze U.S. satellite imagery into seeing things that are not there.

“The Chinese have already designed…to manipulate scenes and pixels to create things for nefarious reasons,” explained Myers at the recent Genius Machines summit on artificial intelligence in Arlington, Virginia.

When make-believe becomes real

An article by researchers from the University of Montreal explained how GANs use deep learning methods to produce new data. While creativity is a mental faculty usually associated with humans, GANs learn from past data and use what they have learned to create something of their own. This is the “generation” part of the network.

In simpler terms, GANs create data in the same manner that a person picks out words from a random string of letters: “PAPLE” can yield the words pal, apple, or leap, depending on whether the person has learned these words before being presented with the string.
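The letter analogy can be sketched in a few lines of Python. This is only an illustration of the analogy, not of how a GAN works internally; the function name `formable_words` and the tiny vocabulary are hypothetical and stand in for “what the person has already learned.”

```python
from collections import Counter


def formable_words(letters, vocabulary):
    """Return the known words that can be spelled from the given letters.

    `vocabulary` plays the role of prior learning: a word that was never
    learned cannot be recognized, even if the letters for it are present.
    """
    available = Counter(letters.lower())
    found = []
    for word in vocabulary:
        needed = Counter(word.lower())
        # Counter subtraction keeps only positive counts, so an empty
        # result means every needed letter is available often enough.
        if not (needed - available):
            found.append(word)
    return sorted(found)


if __name__ == "__main__":
    learned = {"pal", "apple", "leap", "zebra"}  # hypothetical vocabulary
    print(formable_words("PAPLE", learned))
```

A person who has only learned “pal” would find just that one word in the same string, which is the point of the analogy: what comes out depends on what was learned beforehand.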

The “adversarial” aspect of the network addresses the question raised by that scenario: whether the generated data (including images) can pass for the real thing. To do that, a GAN contains a second network, called the discriminator, that compares generated data against real data and tries to tell the two apart. The two networks are at odds with each other: the generator keeps adjusting the values it produces to fool the discriminator into believing that they are the real thing.
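The tug-of-war between the two parts can be shown with a deliberately tiny example. The sketch below is an assumption-laden toy, not the program described by the NGA: the “real data” are just numbers drawn around 4.0, the generator has a single parameter `b`, the discriminator is a one-variable logistic model, and every name (`train_toy_gan`, `real_mean`, and so on) is made up for illustration.

```python
import math
import random


def sigmoid(t):
    """Numerically stable logistic function."""
    if t >= 0:
        return 1.0 / (1.0 + math.exp(-t))
    e = math.exp(t)
    return e / (1.0 + e)


def train_toy_gan(steps=20000, lr=0.05, real_mean=4.0, seed=0):
    """Train a one-parameter generator against a logistic discriminator.

    Generator:      fake = noise + b           (tries to look like real data)
    Discriminator:  D(x) = sigmoid(w * x + c)  (estimates P(x is real))
    """
    rng = random.Random(seed)
    b = 0.0          # generator starts far away from the real data
    w, c = 0.0, 0.0  # discriminator starts with no opinion (D = 0.5)
    for _ in range(steps):
        real = rng.gauss(real_mean, 1.0)
        fake = rng.gauss(0.0, 1.0) + b

        # Discriminator step: gradient ascent on log D(real) + log(1 - D(fake)).
        d_real = sigmoid(w * real + c)
        d_fake = sigmoid(w * fake + c)
        w += lr * ((1.0 - d_real) * real - d_fake * fake)
        c += lr * ((1.0 - d_real) - d_fake)

        # Generator step: gradient ascent on log D(fake), i.e. shift the
        # fakes in whichever direction the discriminator rates as "more real".
        d_fake = sigmoid(w * fake + c)
        b += lr * (1.0 - d_fake) * w
    return b, w, c


if __name__ == "__main__":
    b, w, c = train_toy_gan()
    print("generator offset b:", round(b, 2))
    print("D(typical fake):", round(sigmoid(w * b + c), 2))
```

As training goes on, the generator's offset drifts toward the center of the real data, and the discriminator's verdict on a typical fake moves toward a coin flip. Image-generating GANs follow the same loop, with deep neural networks in place of these two one-line models.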

For instance, GANs may trick automated image analysis into concluding that a bridge exists at a certain location when there isn’t one in real life. According to Myers, this technology can greatly affect the U.S. military, especially since it relies heavily on satellite images and automated image analyses.

“From a tactical perspective or mission planning, you train your forces to go a certain route, toward a bridge, but it’s not there. Then there’s a big surprise waiting for you,” he added in a report to Defense One.

Fake images, real consequences

While the idea of weaponizing deepfakes, especially for military use, sounds like a sinister plot, this is not the first time the technology has raised concerns. FaceApp, the viral app that lets users see older versions of themselves, has been dogged by controversy thanks to an unusual privacy policy that allows the makers of the app to use uploaded images for “commercial purposes.” In China, a deepfake app that puts a person’s face into movies and TV shows has recently drawn flak over its perceived threat to user privacy. Zao, which was developed by Chinese dating company Momo, has a privacy policy explicitly stating that the company gets a “free, irrevocable, permanent, transferable, and relicense-able” license to all user-generated images. The developers have responded to the backlash, saying that they won’t use images or videos for anything beyond app improvements.

Aside from raising privacy concerns, the technology has also set off alarm bells among experts over its potential for less-than-noble applications. In particular, one piece of software used deepfake technology to superimpose the faces of female celebrities onto performers in a porn film.

“At the most basic level, deepfakes are lies disguised to look like truth,” explained Andrea Hickerson, director for the University of South Carolina College of Information and Communications, in an article in Popular Mechanics.

“If we take them as truth or evidence, we can easily make false conclusions with potentially disastrous consequences.”




COPYRIGHT © 2018 MILITARYTECHNOLOGY.NEWS
